Environment overview

OS: RHEL 7.2

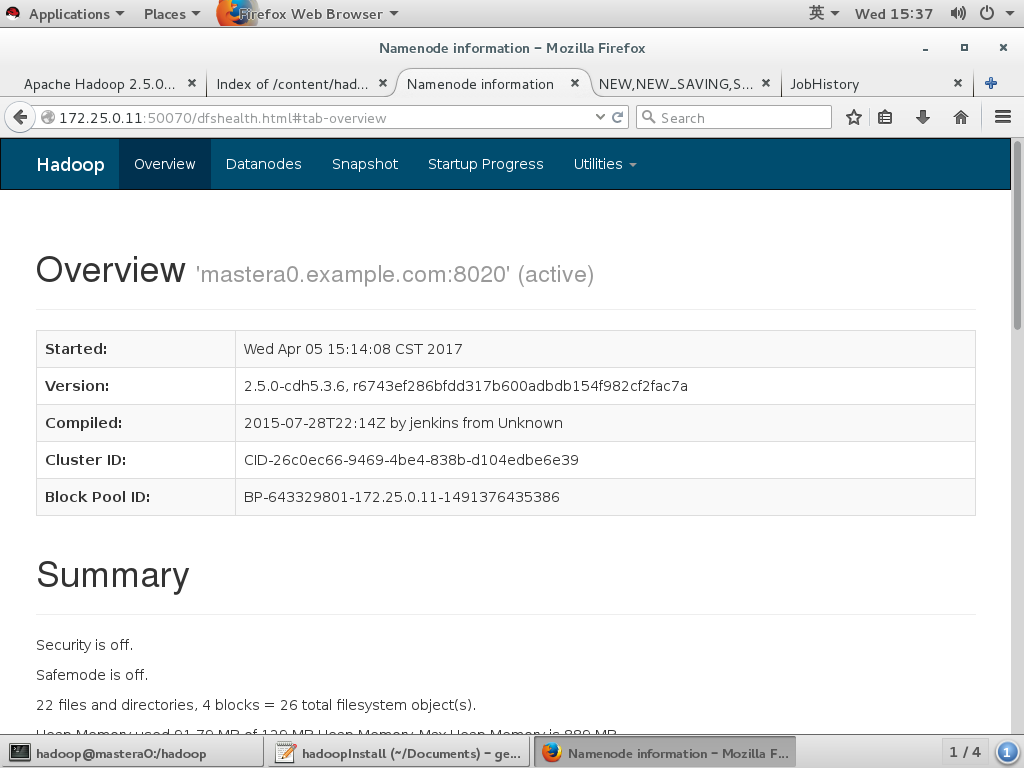

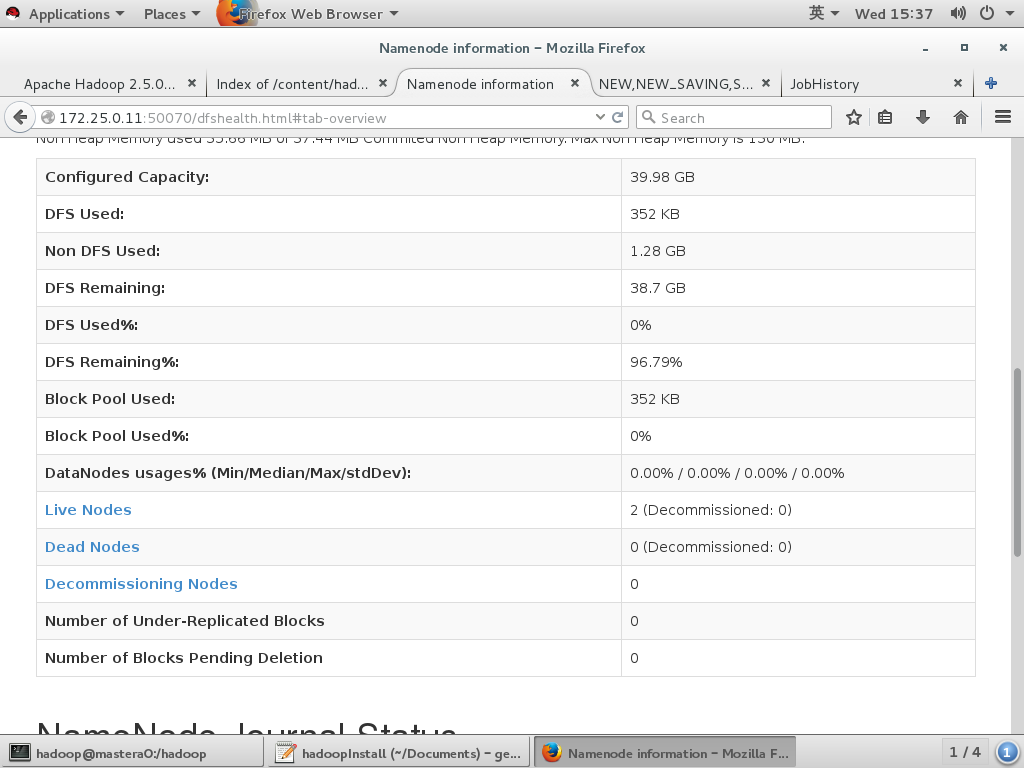

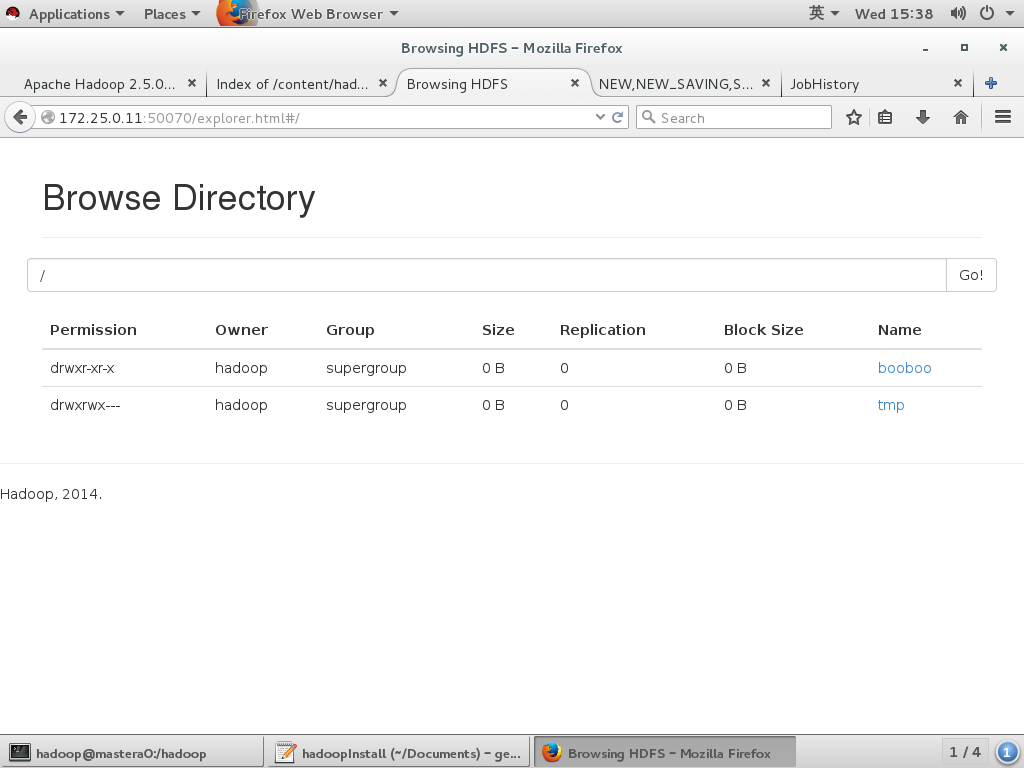

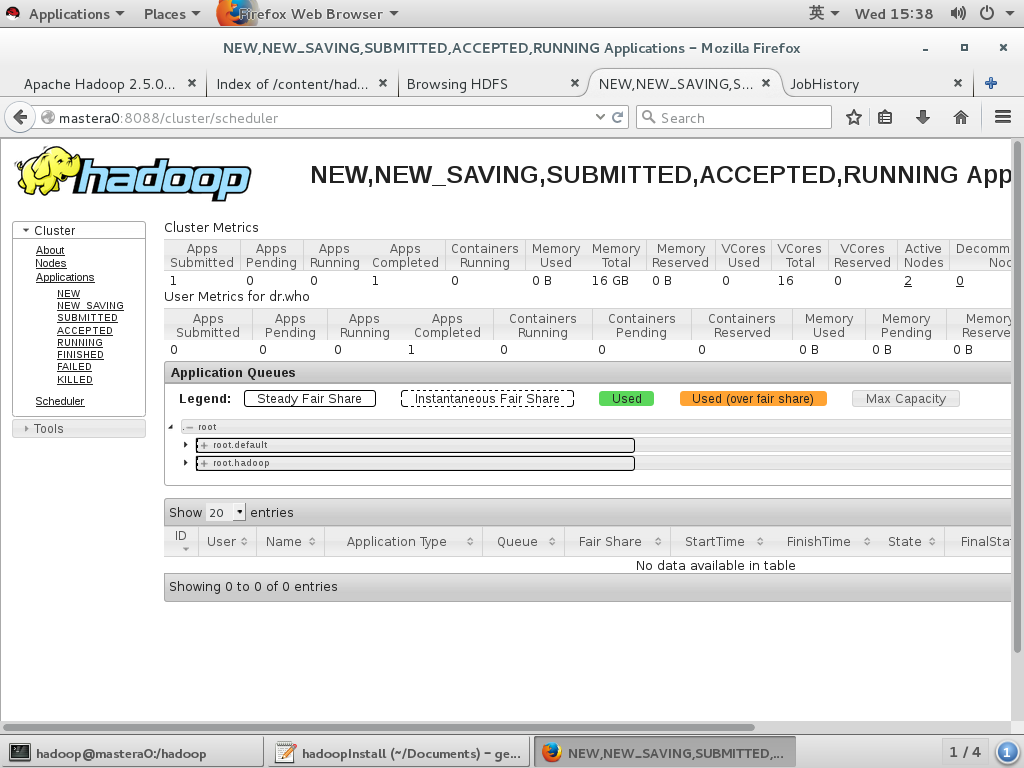

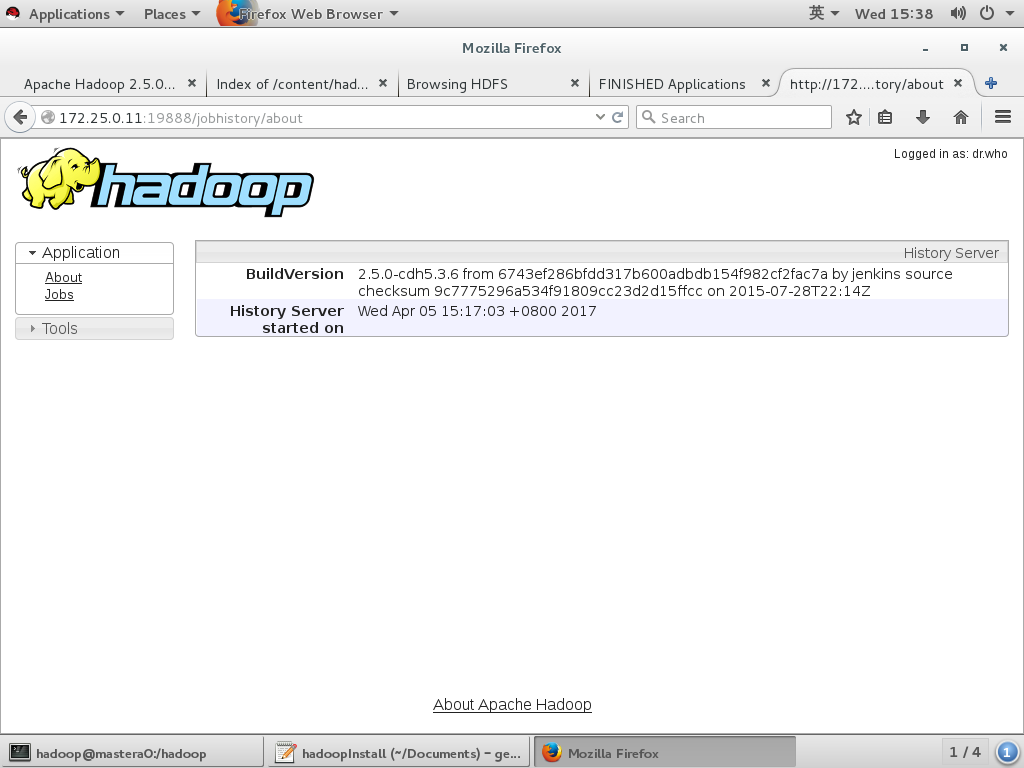

mastera 172.25.0.11: NameNode (8020, 50070), ResourceManager (8032, 8030, 8088, 8031, 8033), JobHistory server (10020, 19888)

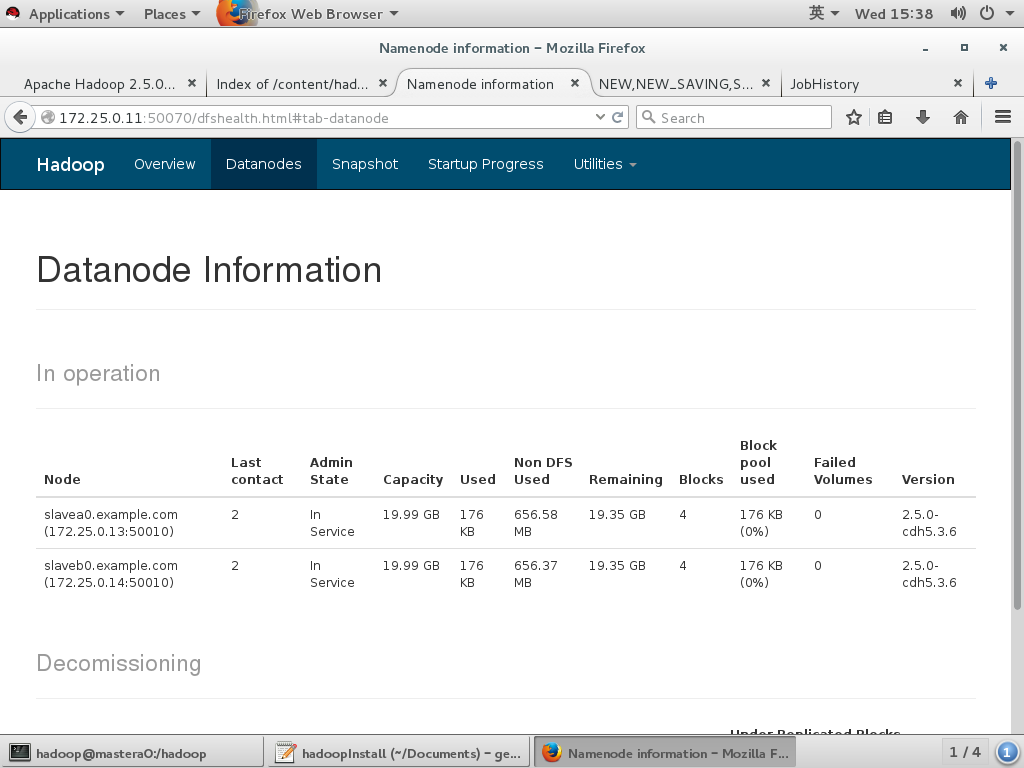

slavea 172.25.0.13: DataNode, NodeManager

slaveb 172.25.0.14: DataNode, NodeManager
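Once the cluster is running, you can check the mastera ports listed above. The helper below is a minimal sketch. `missing_ports` and `EXPECTED_PORTS` are hypothetical names, not part of the original setup. It reads `ss -ltn` output on stdin, which also lets you test it offline, and prints every expected port that is not listening.

```bash
#!/bin/bash
# Hypothetical helper: ports taken from the mastera line above
# (NameNode, ResourceManager, JobHistory server).
EXPECTED_PORTS="8020 50070 8032 8030 8088 8031 8033 10020 19888"

# Print each expected port that does not appear as a listening port.
# Input: `ss -ltn` output on stdin (column 4 is "Local Address:Port").
missing_ports() {
  local listening p
  # Skip the header line; keep only the text after the last ":" of column 4
  listening="$(awk 'NR > 1 { n = split($4, a, ":"); print a[n] }' | sort -u)"
  for p in $EXPECTED_PORTS; do
    # -x: whole-line match, so 8030 cannot match 80301
    if ! grep -qx "$p" <<< "$listening"; then
      echo "$p"
    fi
  done
}
```

On mastera, run `ss -ltn | missing_ports`. Empty output means every port is open. 50070 is the Hadoop 2.x NameNode web UI port. Hadoop 3.x moved it to 9870.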

Environment initialization script

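One step such an init script typically performs is making every node resolve the others by name. The script below is a minimal sketch of that step, not the author's script. The hostnames and IPs come from the environment overview. `hosts_entries` and `append_missing_hosts` are hypothetical names.

```bash
#!/bin/bash
# Hypothetical sketch: "ip:hostname" pairs from the environment overview.
NODES="172.25.0.11:mastera 172.25.0.13:slavea 172.25.0.14:slaveb"

# Print one /etc/hosts line ("ip<TAB>hostname") per node
hosts_entries() {
  local node
  for node in $NODES; do
    printf '%s\t%s\n' "${node%%:*}" "${node#*:}"
  done
}

# Append only the entries not already present (exact line match),
# so running the script twice does not duplicate lines.
append_missing_hosts() {
  local file="$1" line
  while IFS= read -r line; do
    grep -qxF "$line" "$file" 2>/dev/null || printf '%s\n' "$line" >> "$file"
  done < <(hosts_entries)
}
```

Run `append_missing_hosts /etc/hosts` as root on each of the three nodes.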

Install JDK & Hadoop

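After unpacking the JDK and Hadoop tarballs, the hadoop user needs a few environment variables. This sketch prints them. The install paths `/opt/jdk` and `/opt/hadoop` are assumptions, not the paths used in the original script. `hadoop_env` is a hypothetical name.

```bash
#!/bin/bash
# Assumed install locations; override JAVA_DIR / HADOOP_DIR as needed.
JAVA_DIR="${JAVA_DIR:-/opt/jdk}"
HADOOP_DIR="${HADOOP_DIR:-/opt/hadoop}"

# Unquoted heredoc: $JAVA_DIR and $HADOOP_DIR expand now, while the
# escaped \$ references stay literal and expand when the profile is sourced.
hadoop_env() {
  cat <<EOF
export JAVA_HOME=$JAVA_DIR
export HADOOP_HOME=$HADOOP_DIR
export HADOOP_CONF_DIR=\$HADOOP_HOME/etc/hadoop
export PATH=\$PATH:\$JAVA_HOME/bin:\$HADOOP_HOME/bin:\$HADOOP_HOME/sbin
EOF
}
```

For example, run `hadoop_env >> ~/.bash_profile`, log in again, and confirm the setup with `java -version` and `hadoop version`.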

Hadoop configuration files

- core-site.xml template: share/doc/hadoop-project-dist/hadoop-common/core-default.xml

- hdfs-site.xml template: share/doc/hadoop-project-dist/hadoop-hdfs/hdfs-default.xml

- yarn-site.xml template: share/doc/hadoop-yarn/hadoop-yarn-common/yarn-default.xml

- mapred-site.xml template: share/doc/hadoop-mapreduce-client/hadoop-mapreduce-client-core/mapred-default.xml
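The template files listed above hold every property's default value. The helper below is a minimal sketch for looking one up. `default_value` is a hypothetical name. It is a plain text scan, not an XML parser, and it assumes each `<name>` and `<value>` sit on separate lines inside a `<property>` block.

```bash
#!/bin/bash
# Print the <value> that follows "<name>KEY</name>" in a *-default.xml file.
# Matching on the full "<name>KEY</name>" string means dfs.replication
# does not accidentally match dfs.replication.max.
default_value() {
  local xml="$1" key="$2"
  awk -v key="$key" '
    index($0, "<name>" key "</name>") { found = 1; next }
    found && /<value>/ {
      sub(/.*<value>/, ""); sub(/<\/value>.*/, ""); print; exit
    }
  ' "$xml"
}
```

For example, `default_value $HADOOP_HOME/share/doc/hadoop-project-dist/hadoop-hdfs/hdfs-default.xml dfs.replication` should print the stock default, 3.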

The core settings of each file:

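The exact values the author used are not preserved here. This sketch generates a plausible minimal set of the four site files for this cluster. The property names are standard Hadoop 2.x keys. The values are derived from the node and port list above and are assumptions. In particular, `dfs.replication` is 2 only because there are two DataNodes. `prop`, `site_xml` and the `*_site` functions are hypothetical names.

```bash
#!/bin/bash
MASTER=mastera

# Print one <property> element
prop() {
  printf '  <property>\n    <name>%s</name>\n    <value>%s</value>\n  </property>\n' "$1" "$2"
}

# Wrap alternating name/value arguments in a <configuration> document
site_xml() {
  printf '<?xml version="1.0"?>\n<configuration>\n'
  while [ "$#" -ge 2 ]; do
    prop "$1" "$2"
    shift 2
  done
  printf '</configuration>\n'
}

core_site()   { site_xml fs.defaultFS "hdfs://$MASTER:8020"; }
hdfs_site()   { site_xml dfs.replication 2; }   # assumed: two DataNodes
yarn_site()   { site_xml yarn.resourcemanager.hostname "$MASTER" \
                         yarn.nodemanager.aux-services mapreduce_shuffle; }
mapred_site() { site_xml mapreduce.framework.name yarn \
                         mapreduce.jobhistory.address "$MASTER:10020" \
                         mapreduce.jobhistory.webapp.address "$MASTER:19888"; }

# Usage (not run here):
# for f in core hdfs yarn mapred; do "${f}_site" > "$HADOOP_HOME/etc/hadoop/$f-site.xml"; done
```

Setting only `yarn.resourcemanager.hostname` works because, in yarn-default.xml, the ResourceManager addresses default to that hostname with ports 8032, 8030, 8031, 8033 and 8088. Those match the list above.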

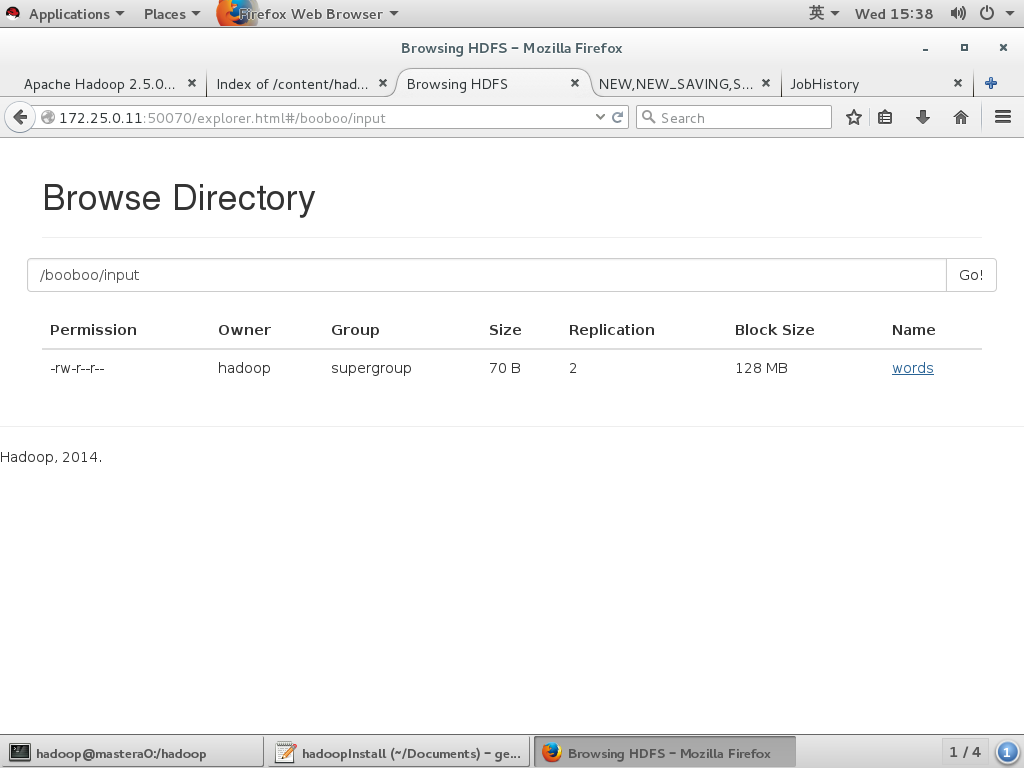

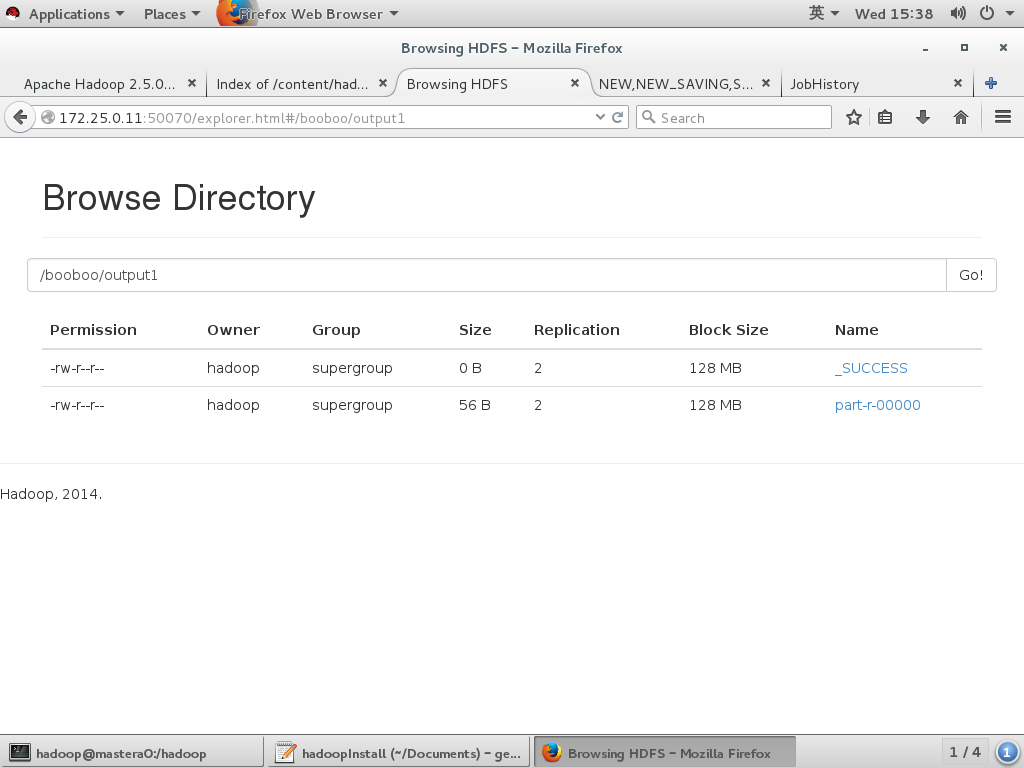

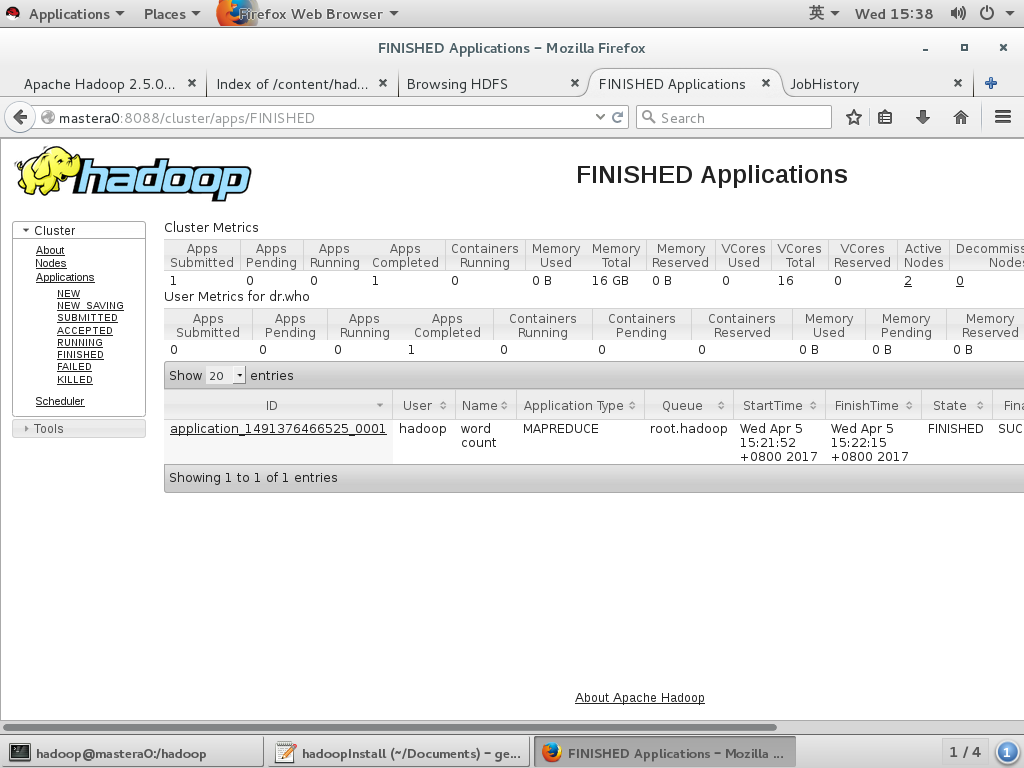

WordCount test

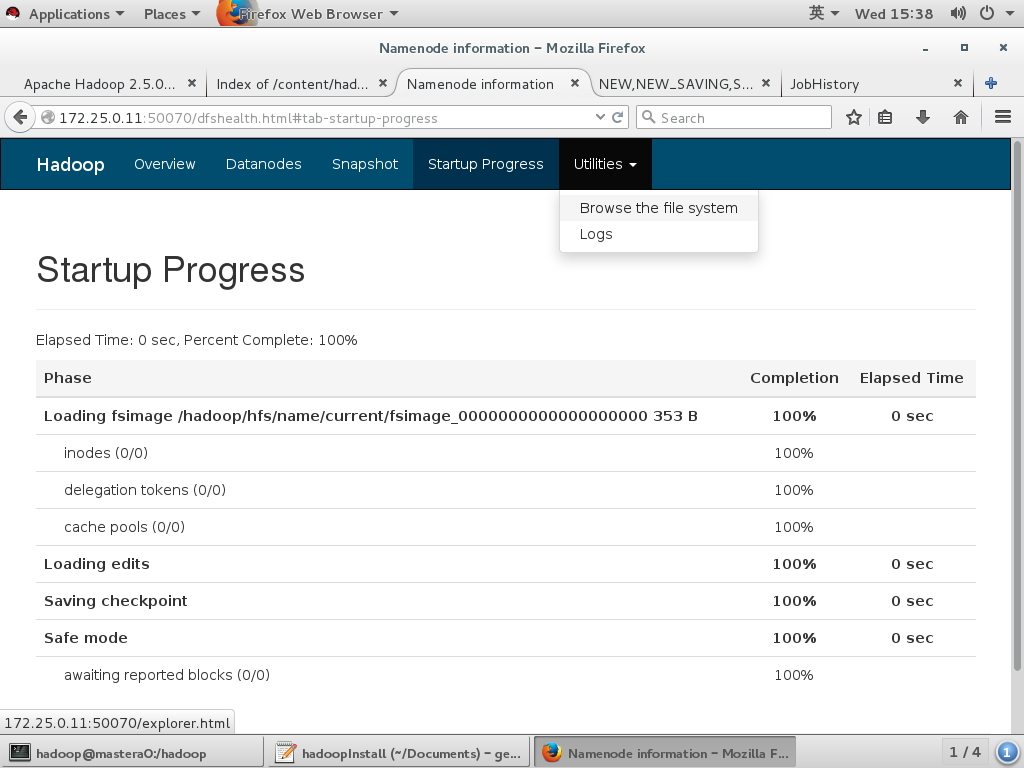
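To sanity-check the WordCount result, you can compute the same counts locally and diff them against the job output. This is a minimal sketch. `local_wordcount` and `words.txt` are hypothetical. Splitting on whitespace and sorting bytewise (`LC_ALL=C`) approximates the example job's whitespace tokenizing and byte-order key sorting.

```bash
#!/bin/bash
# Subshell body so LC_ALL=C applies to tr, sort and uniq without leaking.
# Output format matches WordCount: "word<TAB>count", sorted by word.
local_wordcount() (
  export LC_ALL=C
  tr -s '[:space:]' '\n' < "$1" \
    | awk 'NF' \
    | sort \
    | uniq -c \
    | awk '{ print $2 "\t" $1 }'
)

# Cluster side, for comparison (not run here):
# hdfs dfs -mkdir -p /input && hdfs dfs -put words.txt /input
# hadoop jar $HADOOP_HOME/share/hadoop/mapreduce/hadoop-mapreduce-examples-*.jar wordcount /input /output
# diff <(hdfs dfs -cat /output/part-r-00000) <(local_wordcount words.txt)
```

With the default single reducer, the whole result lands in `part-r-00000`, so a single `diff` covers it.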

Format the NameNode

Detailed operation log, kept for reference

[hadoop@mastera0 ~]$ hdfs namenode -format
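Format the NameNode only once. Re-running the format on a cluster that already holds data generates a new clusterID, and the existing DataNodes then refuse to register. This sketch shows a guard around the command. `needs_format` and `format_once` are hypothetical names. `NAME_DIR` is an assumption: point it at your `dfs.namenode.name.dir`. The stock default is `${hadoop.tmp.dir}/dfs/name`.

```bash
#!/bin/bash
# Assumed location; replace with the configured dfs.namenode.name.dir
NAME_DIR="${NAME_DIR:-/tmp/hadoop-$USER/dfs/name}"

# Exit status 0 only if the directory has never been formatted
# (a formatted name dir contains current/VERSION)
needs_format() {
  [ ! -f "$1/current/VERSION" ]
}

format_once() {
  if needs_format "$NAME_DIR"; then
    hdfs namenode -format
  else
    echo "skip: $NAME_DIR is already formatted" >&2
    return 1
  fi
}
```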